Eveince Self-driving hedge fund April 2022 Release

Development details, new features, and performance improvements from February 1st to April 27th, 2022

Deep dive in data

Our data team at Eveince has adopted a new development methodology to address previous challenges, including exhaustive planning sessions and inflexibility due to long sprints. In the new development framework, data team domains are separated and each is assigned to a Directly Responsible Individual (DRI). Each domain has its own development plan and backlog. DRIs are responsible for maintaining their domain's backlog with respect to data KPIs and business requirements. This structure allows the data team to focus on separate domains and avoid redundant planning discussions. Weekly iteration review sessions are used to regularly validate each domain’s progress, coordinate between domains, and prioritize tasks.

Executive summary

Our data team has contributed to the following domains of the Eveince fund pipeline since the last report:

Parameter Optimization:

Optimization service is developed and tested in coordination with online training.

Backtest experiments are not yet satisfactory for deployment.

Order Execution: Order execution training facilities are redesigned to address several limitations and improve coverage and performance.

Feature Space: Volume-based indicators and their associated normalization techniques are implemented and tested.

Direction algorithm (algo service)

Supported pairs are extended to 18 symbols.

EDA on the labeling window size led to a parameter change in production, which increased total trades and volume by 25%.

Bet sizing: A new bet sizing scheme is proposed to ensure coherent behavior across markets.

Market neutral strategy: A novel modeling scheme is proposed to implement group trading as a market-neutral strategy.

Content generation: To introduce our technical competency and advantages, we have focused on content generation in the form of blog posts. Two blog posts are currently finalized, with 7 more in the preparation stage.

Parameter optimization

White box analysis and EDA on the selection process, objectives, and parameters led to a robust optimization pipeline.

Parameters: we are currently optimizing the cluster period for the trend model. This setup addresses the feature space distortion in the previous pipeline.

Objective: a new objective based on delta beta is proposed, which allows the optimization to improve model accuracy as well as portfolio performance. Outlier removal and online training are also implemented for robust objective calculation.

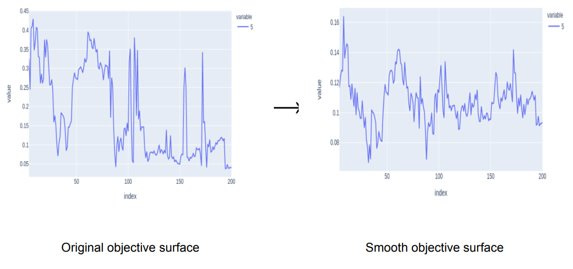

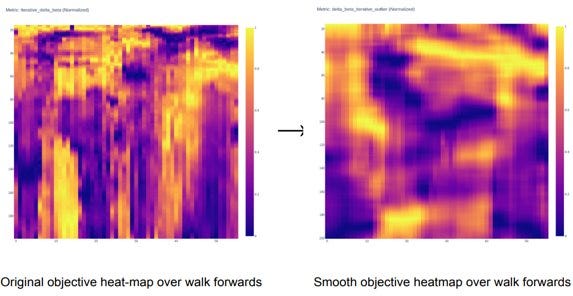

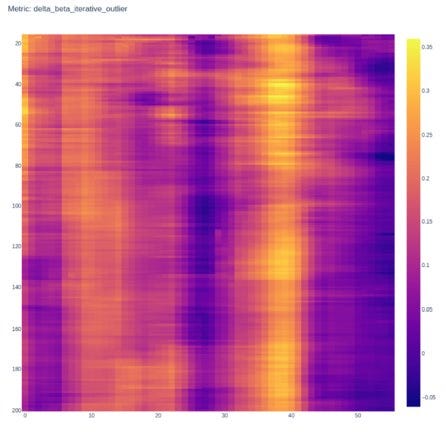

Parameter Selection: the main challenge in selection is locality, both across neighboring parameter values and across walk-forward iterations. A Savitzky-Golay (savgol) filter and iterative metric calculation are employed to address these challenges.

Walk Forward: walk forward is the underlying concept in the optimization pipeline; it determines how data is split for training, backtest, and inference. We have redesigned this procedure to add overlap between the optimization and training periods. This overlap resulted in robust parameter selection.
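The overlapping walk-forward split described above can be sketched as follows. This is only an illustration under stated assumptions: the window lengths, the `overlap` parameter, and the names `WalkForwardSplit` and `walk_forward_splits` are hypothetical, and the inference window is left out for brevity. It is not the production pipeline.

```python
from typing import Iterator, NamedTuple


class WalkForwardSplit(NamedTuple):
    optimize: range  # bars used to score candidate parameters
    train: range     # bars used to fit the model with the chosen parameters
    backtest: range  # out-of-sample bars used for evaluation


def walk_forward_splits(
    n_bars: int,
    optimize_len: int,
    train_len: int,
    backtest_len: int,
    overlap: int,
) -> Iterator[WalkForwardSplit]:
    """Yield rolling splits where the last `overlap` bars of the optimization
    window are also the first `overlap` bars of the training window."""
    if min(optimize_len, train_len, backtest_len) <= 0:
        raise ValueError("window lengths must be positive")
    if not 0 <= overlap <= min(optimize_len, train_len):
        raise ValueError("overlap must fit inside both windows")

    start = 0
    while True:
        opt_end = start + optimize_len
        train_start = opt_end - overlap  # training starts inside the optimization window
        train_end = train_start + train_len
        test_end = train_end + backtest_len
        if test_end > n_bars:
            break
        yield WalkForwardSplit(
            range(start, opt_end),
            range(train_start, train_end),
            range(train_end, test_end),
        )
        start += backtest_len  # roll forward by one backtest window
```

With `overlap=0` the sketch reduces to a conventional walk forward in which optimization and training periods are disjoint.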

EDA on the parameter optimization objective led to a smooth and locally consistent objective space. As the figures in this section illustrate, Savitzky-Golay (savgol) filtering and iterative metric calculation resulted in a smooth selection surface that is locally consistent in terms of both parameter values and walk-forward iterations.
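To make the smoothing idea concrete, here is a minimal sketch of Savitzky-Golay smoothing applied to an objective evaluated on a grid of candidate parameter values, followed by selection on the smoothed curve. The 5-point quadratic window, the edge padding, and the function names are assumptions chosen for illustration; the window and polynomial order used in our pipeline are not stated here, and the iterative metric calculation is not shown.

```python
# Standard convolution coefficients of the 5-point, quadratic Savitzky-Golay filter.
SAVGOL_5_QUADRATIC = (-3 / 35, 12 / 35, 17 / 35, 12 / 35, -3 / 35)


def savgol_smooth(values):
    """Smooth a sequence with the 5-point quadratic Savitzky-Golay filter.

    Edges are handled by repeating the first and last value (an assumption).
    """
    half = len(SAVGOL_5_QUADRATIC) // 2
    padded = [values[0]] * half + list(values) + [values[-1]] * half
    return [
        sum(c * padded[i + k] for k, c in enumerate(SAVGOL_5_QUADRATIC))
        for i in range(len(values))
    ]


def select_parameter(candidates, objective):
    """Pick the candidate whose smoothed objective is highest."""
    smoothed = savgol_smooth(objective)
    best = max(range(len(candidates)), key=smoothed.__getitem__)
    return candidates[best]


# Hypothetical objective values: a broad plateau and one isolated spike at 70.
candidates = [10, 20, 30, 40, 50, 60, 70, 80, 90]
objective = [0.30, 0.32, 0.34, 0.33, 0.31, 0.05, 0.40, 0.05, 0.04]
chosen = select_parameter(candidates, objective)
```

Selecting on the smoothed curve favors parameter values whose neighbors also score well, so an isolated spike in the raw objective is less likely to win.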

In the meantime, several backtest experiments have been under way to evaluate the optimization procedure. Optimization performance is not yet satisfactory, so the production migration will be delayed until we reach a robust procedure.

The parameter optimization service (for production) is also developed and integrated with online training, so once performance is acceptable, the production migration will be seamless.

Order execution

Order execution version 2 is being developed with two main improvements in mind:

Supporting multiple markets with one model

Performance improvement.

A new match engine is developed using pandas data frames for order matching, which leads to an order-of-magnitude improvement in execution during training

The new match engine and feature handler modules are integrated into the simulation environment

Multi-market and side support, feature extraction, and agent profiling are also added to the pipeline

We are now working on order book features and delayed rewards to improve execution performance

We plan to enter the training phase as soon as features and rewards are ready, after which the backtesting and production migration will be started

Order Execution Training Facilities V2

New order execution training facilities are developed with the improvements mentioned above in mind.

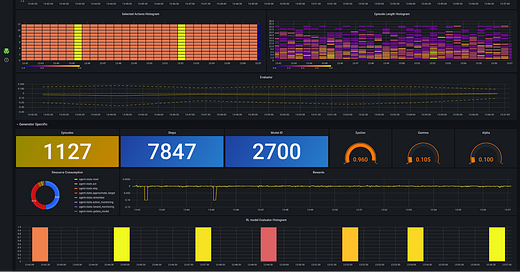

The new monitoring dashboard, which sheds light on the improvements in convergence and speed in the new framework, is illustrated below.

Feature space

Normalization for volume-based indicators is implemented. Newly added indicators are:

Money Flow Index (MFI)

On Balance Volume (OBV)

Force Index (FI)

Volume Price Trend (VPT)

Positive Volume Index (PVI)

Negative Volume Index (NVI)

Accumulation/Distribution Index (ADI)

Chaikin Money Flow Index (CMF)

Backtests produced promising results for volume-based indicators.

We are now refactoring the feature extraction pipeline to support normalization of volume-based indicators. These indicators will be added to the production pipeline after coordination with our system team.
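As an illustration of one indicator from the list above together with a normalization step, the sketch below computes On Balance Volume (OBV) and then applies a rolling z-score. The z-score is an assumption used as an example of normalization, not necessarily the technique implemented in our feature pipeline; the window length and function names are hypothetical, and starting OBV at zero is one common convention.

```python
from statistics import mean, pstdev


def on_balance_volume(closes, volumes):
    """Cumulative volume, added on up closes and subtracted on down closes."""
    obv = [0.0]  # convention assumed here: start the running total at zero
    for prev, curr, vol in zip(closes, closes[1:], volumes[1:]):
        if curr > prev:
            obv.append(obv[-1] + vol)
        elif curr < prev:
            obv.append(obv[-1] - vol)
        else:
            obv.append(obv[-1])
    return obv


def rolling_zscore(values, window):
    """Z-score of each value against its trailing window; None until the window fills."""
    out = []
    for i in range(len(values)):
        if i + 1 < window:
            out.append(None)
            continue
        chunk = values[i + 1 - window : i + 1]
        sd = pstdev(chunk)
        out.append(0.0 if sd == 0 else (values[i] - mean(chunk)) / sd)
    return out
```

OBV is an unbounded running total, so its raw level is not comparable across markets or over time; some normalization of this kind is what makes it usable as a model feature.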

Market behavior analytics

While exploring parameter effects on model performance, we faced scenarios in which no parameter value resulted in an acceptable objective. In these situations, market behavior is unpredictable for the model regardless of the parameters. We can use these analytics to decide when to stop trading based on market conditions.
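A trading gate built on this observation could look like the following minimal sketch. The acceptance threshold and the function name `market_is_tradable` are hypothetical; how an "acceptable objective" is defined is not specified in this report.

```python
def market_is_tradable(objective_by_parameter, min_acceptable):
    """Return True when at least one candidate parameter reaches the
    acceptable objective; False means no parameter works for this market."""
    if not objective_by_parameter:
        return False
    return max(objective_by_parameter.values()) >= min_acceptable
```

For example, `market_is_tradable({10: 0.2, 20: 0.3}, min_acceptable=0.5)` is `False`, which would signal that trading should pause for that market in the current window.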

Services

The labeling mechanism in our Algo Service was updated:

As discussed in the previous section, the labeling window length is updated in production. This change results in a 25% increase in the number of trades and consequently the total traded volume.

Backtest experiments confirmed the improvement in various metrics with this change. However, any increase in traded volume also increases the commission we pay, which is a separate issue that needs to be addressed with exchange options.

Supported symbols

With 6 more symbols added to our production services, we now support 18 pairs: BTC, ETH, ADA, BNB, LINK, MATIC, SOL, TRX, EOS, ETC, LTC, LUNA, NEO, XLM, XMR, XRP, DOT, and FTM (all quoted in USDT).

Optimization and Online Training Services

Optimization service is developed and tested for production. The online training service is also updated to work with optimization results. Overall, we don’t have any barriers to deploying optimization as soon as we are satisfied with optimization robustness.

Bet sizing

One crucial aspect of bet sizing is market normalization. More specifically, bet sizing needs to assess risk with respect to market behavior. Our current bet sizing algorithm relaxes this aspect and acts on a mean VaR value from participating markets to ensure a predefined level of risk.

A new modeling scheme is proposed to address this drawback, which is currently being backtested for validation.

The new scheme assesses risk for each individual market and has the advantage of a tangible explanation: one can specify the maximum loss they can afford on a daily basis, and the algorithm will set the VaR dynamically to ensure, with 99% confidence, that there won’t be a loss greater than this value.
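The relationship between an affordable daily loss and position size can be sketched with a parametric VaR. This is a simplified illustration, not the proposed scheme itself: it assumes zero-mean, normally distributed daily returns, and the function name and inputs are hypothetical.

```python
from statistics import NormalDist, pstdev


def position_size_for_max_loss(daily_returns, max_daily_loss, confidence=0.99):
    """Largest notional exposure whose one-day parametric VaR does not
    exceed `max_daily_loss` at the given confidence level.

    Assumes zero-mean, normally distributed daily returns (an assumption).
    """
    sigma = pstdev(daily_returns)
    if sigma == 0:
        raise ValueError("return volatility is zero; VaR is undefined")
    z = NormalDist().inv_cdf(confidence)  # about 2.33 for 99%
    var_per_unit = z * sigma              # fraction of notional at risk
    return max_daily_loss / var_per_unit
```

Because the volatility is estimated per market, a more volatile market automatically receives a smaller position for the same affordable loss, which is the per-market behavior described above.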

R&D on market neutral strategies

Performance metrics

To measure the performance of market-neutral strategies, we surveyed the available metrics. Conventional metrics alongside some strategy-specific methods can be used for evaluation. (report)

Spread Modeling

Co-movement has been implemented as a pair spread model.

Criterion Tests

Several statistical tests are implemented to facilitate the pair selection and validation processes. These tests check for stationary properties, unpredictability, and mean-reverting behavior. (report)

Pair selection

Based on the statistical tests above, an optimization process is implemented to extract a linear combination of a pair or group of assets for pair trading. (report)
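For the two-asset case, the idea of extracting a linear combination and checking it for mean reversion can be sketched as below. Ordinary least squares stands in for the optimization process and an AR(1) coefficient stands in for the formal statistical tests; both are simplifying assumptions, and all function names are hypothetical.

```python
from statistics import mean


def ols_slope(y, x):
    """Slope of the least-squares regression of y on x."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var = sum((a - mx) ** 2 for a in x)
    return cov / var


def pair_spread(y, x, hedge_ratio):
    """Linear combination y - hedge_ratio * x of two price series."""
    return [b - hedge_ratio * a for a, b in zip(x, y)]


def ar1_coefficient(series):
    """Slope of s[t] on s[t-1]; values well below 1 hint at mean reversion."""
    return ols_slope(series[1:], series[:-1])


# Hypothetical prices: y tracks 2 * x plus a small oscillating deviation.
x = [float(t) for t in range(1, 11)]
y = [2.0 * a + (0.5 if i % 2 == 0 else -0.5) for i, a in enumerate(x)]
beta = ols_slope(y, x)
phi = ar1_coefficient(pair_spread(y, x, beta))
```

A production selection step would replace the AR(1) heuristic with proper stationarity and mean-reversion tests and would extend the regression to groups of more than two assets.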

Eveince modeling

We have introduced a novel model for group trading based on the partitioning logic in Eveince. This model is implemented and merged into “btrading” and the core backtest. Although the strategy performance is very promising, we are not yet ready to publish the portfolio performance.

System Insights

Executive summary

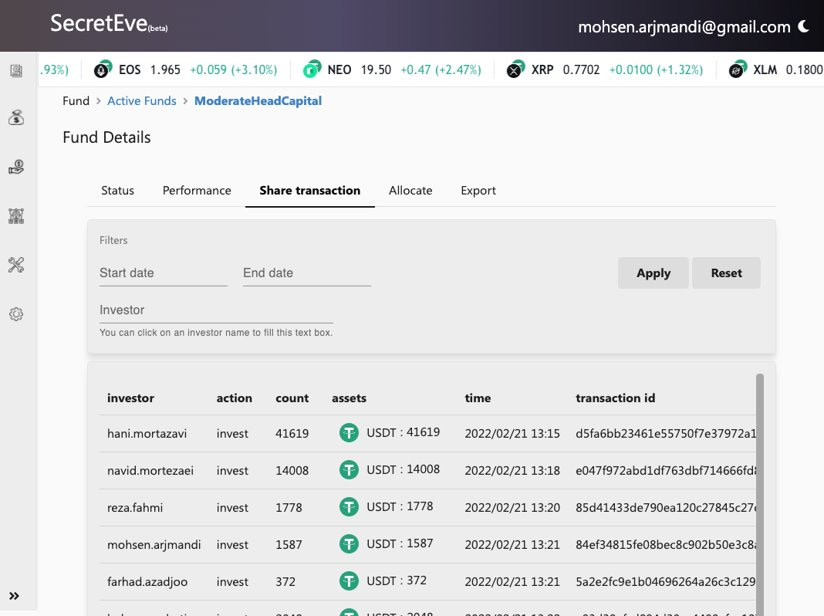

We have fully migrated old accounts and investors to the fund structure. Each investor has their own account managed by the fund manager, and reports now show performance based on the fund's overall performance and provide NAVPS.

We also developed our first futures trading prototype and learned how to design the real system. Moreover, a dashboard was created for investors, where they can see their assets and related reports about performance and details of their assets. We also worked on designing a trading platform, a new structure for external integration of systems like algorithms.

While doing all that, maintenance and improvements in live systems were the top priority, and we had several maintenance and improvement releases for core services, reports, and front-end.

Overview of feature implementation and R&D

Understanding Binance's futures market order-processing workflow, to find corner cases and new required implementations

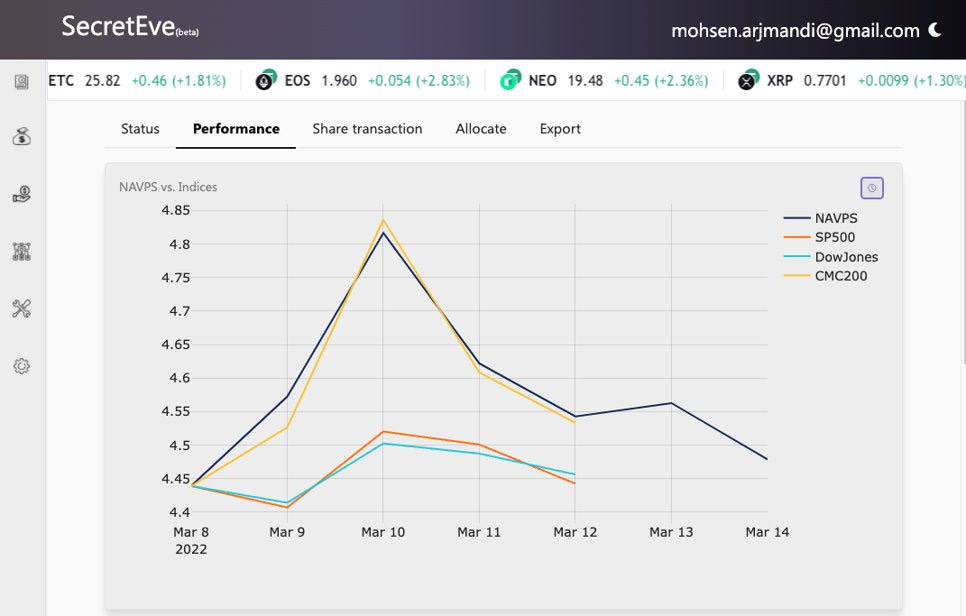

New feature: Comparing the fund NAVPS with determined indices as live Eveince performance

New feature: Export and download fund report

Reorganized UI sections to be more user friendly and coherent

A new design and a dedicated page for managing subscriptions with advanced options like batch update

Access to USDC markets for a fund manager

Many reliability improvements in trading and data providing

Cloud migration planning and design

Futures prototype

Futures/Pair trading design

Investor dashboard

Investor reports

Design and planning for automated factsheet generation

Reliability improvements in reporter

Several improvements in reports

Automated trader unsubscription

Several improvements in the front-end

Performance improvements in front-end

Migrated domains for admin dashboard

Investigation for issues in algo service

Launching the Eveince site

We’ve also onboarded our newly joined team member, Salar, who has already started contributing to the product

Migration to the fund structure

To migrate to the fund structure, it was required to cash out all money and allocate it to the fund with a NAV defined by the fund manager.

This was done by setting the value of each share to $1, and all investors have been added to the new structure.
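The arithmetic behind this migration is simple enough to sketch. With an initial share value of $1, each investor receives as many shares as dollars cashed out, and NAVPS is afterwards the fund's NAV divided by the shares outstanding. The investor names, amounts, and function names below are hypothetical.

```python
from decimal import Decimal


def migrate_to_fund(cash_by_investor, initial_share_value=Decimal("1")):
    """Allocate shares to each investor at the initial share value."""
    return {
        investor: cash / initial_share_value
        for investor, cash in cash_by_investor.items()
    }


def navps(nav, shares_by_investor):
    """Net asset value per share: fund NAV divided by shares outstanding."""
    return nav / sum(shares_by_investor.values())


# Hypothetical example: two investors cash out and join the fund.
shares = migrate_to_fund({"investor_a": Decimal("1500"), "investor_b": Decimal("500")})
later_navps = navps(Decimal("2200"), shares)  # fund NAV after some performance
```

Each investor's holding is then `shares * NAVPS`, so all investors see the same percentage performance regardless of when their old account was opened.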

The fund structure is a stand-alone service that can be enabled or disabled based on the fund manager’s requirements. A portfolio can be started and used without a fund structure, which keeps simpler workflows available.

Real-time comparison of fund’s NAVPS vs selected market indices

We’ve added the S&P 500, the Dow Jones index, and CMC200. Other required indexes will be added too.

Note: Index data is not available during the weekend
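One way to put NAVPS and an index on a common scale, while coping with missing weekend index values, is to carry the last known index value forward and rebase both series to 100 at the start. This is an illustrative sketch only; forward filling is an assumption about how the gaps could be handled, and the function names are hypothetical.

```python
def forward_fill(values):
    """Replace None entries with the most recent known value."""
    filled, last = [], None
    for v in values:
        if v is not None:
            last = v
        filled.append(last)
    return filled


def rebase(values, base=100.0):
    """Scale a series so its first known value equals `base`.

    Raises StopIteration if the series contains no known value.
    """
    first = next(v for v in values if v is not None)
    return [None if v is None else base * v / first for v in values]


# Hypothetical daily series; the index has no data on the two weekend days.
fund_navps = [1.00, 1.01, 1.02, 1.02, 1.03]
index_level = [4000.0, 4040.0, None, None, 4080.0]

fund_curve = rebase(fund_navps)
index_curve = rebase(forward_fill(index_level))
```

Since crypto markets trade through the weekend, the fund curve keeps moving on those days while the filled index curve stays flat.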

New subscriptions UI exposing easy-to-use features for fund operations

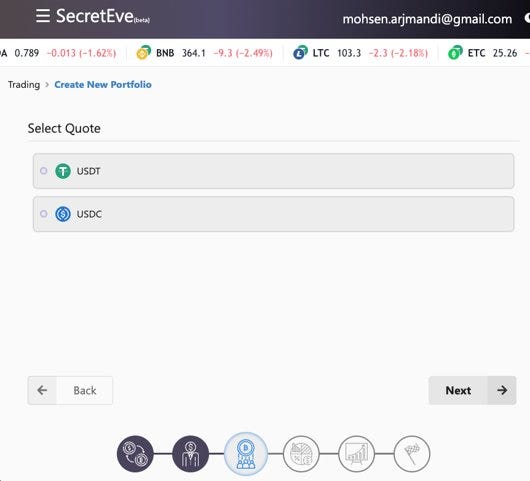

New stable coin and new navigation panel

The fund manager can now build a portfolio based on USDC. The system was designed from the beginning to support any coin; the required configurations were added to the core in the previous release, and in this release the feature is ready for the fund manager to use. This feature enables us to use other markets and increase our AUM capacity.

New system and process for better collaboration between Data and System team

One of our challenges, with both technical and non-technical roots, was maintaining our uptime while our data team was still responsible for the uptime of some services. To tackle this challenge, a lot of development and planning had to be done:

Re-writing Data Service (previous report)

Defining new inter-team collaboration protocols

Defining solutions that best match current artifact delivery systems in place

Designing a new model-serving kit (in review)

Provide this new structure to our data team (TBD)

Implement current models in Algo-service, Bet-sizing, and order execution (TBD)