Order Placement: Problem Definition and Modeling

This series of blog posts gives an overview of the execution model developed at Eveince. In this first part, we describe what order execution has to do, give a formal problem definition, and review how Eveince models the problem.

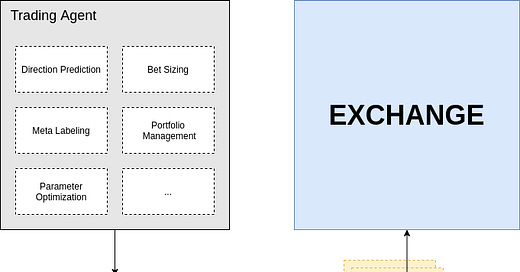

Several components must come together to form a comprehensive algorithmic trading platform. In such a platform, the trading policy, predictive models, risk factors, and several other components work together to decide when to buy or sell a specific symbol and what volume to execute. This decision is then converted into a set of orders to be sent to the exchange. Order execution is in charge of that conversion.

Order Execution Goals

One order execution policy is to place market orders. This is the most naive way to execute an order: neither the execution price nor the placement volume is optimized. A good execution policy, on the other hand, takes market conditions into account to obtain the best prices. For instance, if you want to buy $1000 worth of Ethereum, a market order might get you 15 ETH, while an optimized execution policy might get you 16 ETH. Better execution therefore translates directly into better profits. In addition, because order execution splits the original order into smaller parts and places them over time, it minimizes market impact. Buying 1000 BTC, for example, requires careful handling: placing the whole amount as a single order would cause market fluctuations that can hurt your trading agent.
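To make the cost of a naive market order concrete, here is a minimal sketch that "walks" the ask side of an order book: a market buy consumes the cheapest levels first, so the average fill price rises as the order eats into deeper levels. The book levels in `ASKS` and the `market_buy` helper are hypothetical illustrations, not real market data or an Eveince API.

```python
from typing import List, Tuple

# Hypothetical ask side of an ETH order book: (price in $, available ETH), best price first.
ASKS: List[Tuple[float, float]] = [
    (60.0, 5.0),
    (65.0, 5.0),
    (75.0, 10.0),
]


def market_buy(asks: List[Tuple[float, float]], budget: float) -> Tuple[float, float]:
    """Spend `budget` dollars by sweeping ask levels from best to worst.

    Returns (quantity bought, average fill price).
    """
    remaining = budget
    bought = 0.0
    for price, size in asks:
        level_cost = price * size
        if level_cost <= remaining:
            # Consume the whole level and move deeper into the book.
            bought += size
            remaining -= level_cost
        else:
            # Partially consume this level with what is left of the budget.
            bought += remaining / price
            remaining = 0.0
            break
    spent = budget - remaining
    return bought, spent / bought


qty, avg_price = market_buy(ASKS, 1000.0)
print(f"bought {qty:.4f} ETH at an average of ${avg_price:.2f}")
```

In this toy book the best ask is $60, yet the $1000 market order receives 15 ETH at an average of about $66.67 because it sweeps into more expensive levels. Receiving 16 ETH for the same $1000 would require an average price of $62.50.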

Order execution receives a buy/sell signal associated with a target volume and produces a set of orders with two goals:

– Execute the original order at the best possible price

– Minimize market impact by executing the original order gradually
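A common baseline for the second goal, shown here only for comparison and not as Eveince's method, is time-weighted average price (TWAP) slicing: the parent volume is split evenly across equally spaced time slices. The `twap_schedule` helper and its parameters are hypothetical.

```python
from typing import List, Tuple


def twap_schedule(total_volume: float, horizon_minutes: float, n_slices: int) -> List[Tuple[float, float]]:
    """Split a parent order into equally sized child orders at equally spaced times.

    Returns a list of (start_minute, volume) pairs.
    """
    if n_slices <= 0:
        raise ValueError("n_slices must be positive")
    slice_volume = total_volume / n_slices
    step = horizon_minutes / n_slices
    return [(i * step, slice_volume) for i in range(n_slices)]


# Buy 10 BTC over 4 hours in 24 ten-minute slices.
schedule = twap_schedule(10.0, 240.0, 24)
print(schedule[:3])
```

TWAP spreads the order over time to limit market impact, but it ignores prices entirely, so it addresses only the second goal. A placement model that also chooses prices is needed for the first.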

Formal Problem Definition

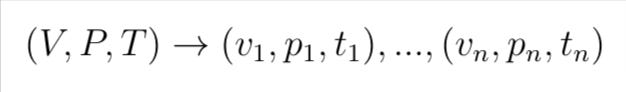

Given a buy/sell order with volume V, price P, and expiration time T, specify the placement policy. More specifically:

Such That,
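One way to write this problem down for a buy order, offered as an illustrative sketch under our own assumptions rather than the exact formulation used at Eveince, is the following. Child order $k = 1, \dots, K$ is placed at time $t_k$ with volume $v_k$ and limit price $p_k$; $q_k$ is the part of it that the market actually fills, assumed here to fill at $p_k$; and $P$ is treated as the benchmark price:

$$
\min_{\{(t_k,\, v_k,\, p_k)\}_{k=1}^{K}} \; \sum_{k=1}^{K} q_k \,(p_k - P)
$$

$$
\text{s.t.} \quad \sum_{k=1}^{K} q_k = V, \qquad 0 \le q_k \le v_k, \qquad 0 \le t_1 < t_2 < \dots < t_K \le T
$$

The objective is the total amount paid above the benchmark; for a sell order the sign of $(p_k - P)$ flips. Because each $q_k$ is decided by the market rather than by the placement policy, the volume constraint can only be targeted, not guaranteed, which is why practical formulations usually add a penalty for volume still unfilled at $T$.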

Placement Model as a Black Box

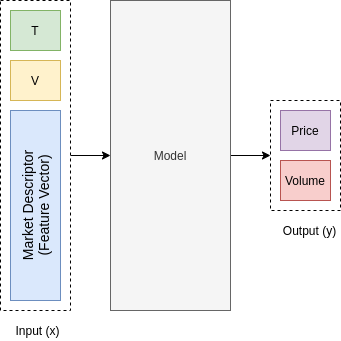

The order placement model is characterized as follows:

Input: the remaining time to execute and the remaining volume

Output: the volume and price of the new order to send to the exchange
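The input/output contract above can be captured as a small typed interface. This sketch is hypothetical: `PlacementState`, `ChildOrder`, `PlacementModel`, and the toy `EvenSplitModel` are names introduced for illustration. It also assumes the model receives market features alongside the remaining time and volume, with the first feature standing in for the mid price.

```python
from dataclasses import dataclass
from typing import Protocol, Sequence


@dataclass(frozen=True)
class PlacementState:
    remaining_minutes: float          # time left until the parent order expires
    remaining_volume: float           # volume still to be executed
    market_features: Sequence[float]  # e.g. values derived from candles / the order book


@dataclass(frozen=True)
class ChildOrder:
    volume: float            # size of the next limit order
    price: float             # limit price of the next order
    lifetime_minutes: float  # how long the order rests before the model is called again


class PlacementModel(Protocol):
    def place(self, state: PlacementState) -> ChildOrder: ...


class EvenSplitModel:
    """Toy model: spread the remaining volume evenly over the remaining 10-minute
    intervals and quote at market_features[0] (assumed to be the mid price)."""

    interval = 10.0

    def place(self, state: PlacementState) -> ChildOrder:
        intervals_left = max(1, int(state.remaining_minutes // self.interval))
        return ChildOrder(
            volume=state.remaining_volume / intervals_left,
            price=state.market_features[0],
            lifetime_minutes=self.interval,
        )


model: PlacementModel = EvenSplitModel()
order = model.place(PlacementState(240.0, 10.0, [10_000.0]))
print(order)
```

Any learned model only has to implement `place` to be swapped in for the toy one.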

As a black box, the placement model decides how to split the original order into smaller ones based on market data such as candles and the order book. In our approach, the model decides how much of the volume should be executed next and at what price. To be more specific, suppose the trading agent has told us to buy 10 bitcoins within the next 4 hours. The placement model is first called to generate the first order, for instance 1 bitcoin at a price of $10k for the next 10 minutes, which means a limit order with volume=1 and price=$10k is sent to the exchange. After 10 minutes, the placement model is called again with the updated remaining time and volume. Suppose the placed order was only partially filled, with half of its volume executed. The remaining volume is then 9.5 bitcoins and the remaining time is 3 hours and 50 minutes. The placement model generates another order for this situation, and the process continues until the original order is filled or the expiration time is reached. The placement model is in charge of generating orders so that the original volume is filled at the best possible price.
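The walk-through above can be simulated with a short loop. This is a sketch under stated assumptions: `run_execution`, `toy_model`, and `half_fill` are hypothetical, the model always proposes 1 BTC at $10k for 10 minutes, and every child order is exactly half filled.

```python
from typing import Callable, List, Tuple

# A placement decision: (volume, limit price, lifetime in minutes).
Decision = Tuple[float, float, float]


def run_execution(
    total_volume: float,
    horizon_minutes: float,
    place: Callable[[float, float], Decision],
    fill: Callable[[float, float], float],
) -> List[Tuple[float, float, float]]:
    """Ask the placement model for child orders until the parent order is filled
    or expires. Returns a log of (remaining_minutes, remaining_volume, filled)."""
    remaining_time, remaining_volume = horizon_minutes, total_volume
    log: List[Tuple[float, float, float]] = []
    while remaining_volume > 1e-12 and remaining_time > 0:
        volume, price, lifetime = place(remaining_time, remaining_volume)
        volume = min(volume, remaining_volume)  # never overshoot the parent order
        filled = fill(volume, price)            # quantity the exchange actually matched
        remaining_volume -= filled
        remaining_time -= lifetime
        log.append((remaining_time, remaining_volume, filled))
    return log


# Hypothetical model from the example: always 1 BTC at $10k, re-evaluated every 10 minutes.
def toy_model(remaining_time: float, remaining_volume: float) -> Decision:
    return 1.0, 10_000.0, 10.0


# Hypothetical fill simulator: every limit order is exactly half filled.
def half_fill(volume: float, price: float) -> float:
    return 0.5 * volume


log = run_execution(10.0, 240.0, toy_model, half_fill)
print(log[0])
print(len(log), log[-1])
```

With these toy assumptions the first log entry is (230.0, 9.5, 0.5), matching the 9.5 bitcoins and 3 hours 50 minutes in the example. Because this naive model never adapts, the parent order expires after 24 rounds with 0.015625 BTC still unfilled, which is exactly the kind of outcome a good placement model should avoid.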

We’ve described the placement model as a black box. We know the input and the expected output. But how should we build such a model?

Modeling

As described in the previous section, the execution model is a function approximator that takes market descriptors (a feature vector) as input and outputs the price and volume to be sent to the exchange. The abstract execution model is illustrated in the figure below.
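As a concrete, hypothetical instance of such a function approximator, the sketch below maps a feature vector to a price and a volume with two linear scores. The bounded parameterization (a tanh-limited offset around an assumed mid price, and a sigmoid fraction of the remaining volume) is our own illustrative choice, not necessarily Eveince's; in practice the weights would be learned rather than set by hand.

```python
import math
from typing import Sequence, Tuple


def dot(w: Sequence[float], x: Sequence[float]) -> float:
    return sum(wi * xi for wi, xi in zip(w, x))


class LinearPlacementPolicy:
    """Hypothetical function approximator: feature vector -> (price, volume).

    Price is a bounded offset around a reference (mid) price and volume is a
    fraction of the remaining volume, so every output is a valid order.
    """

    def __init__(self, w_price: Sequence[float], w_volume: Sequence[float], max_offset: float = 0.01):
        self.w_price = list(w_price)
        self.w_volume = list(w_volume)
        self.max_offset = max_offset  # largest relative deviation from the mid price

    def __call__(self, features: Sequence[float], mid_price: float, remaining_volume: float) -> Tuple[float, float]:
        # Relative price offset, bounded to [-max_offset, max_offset].
        offset = self.max_offset * math.tanh(dot(self.w_price, features))
        # Logistic sigmoid written via tanh, which avoids overflow in math.exp.
        fraction = 0.5 * (1.0 + math.tanh(0.5 * dot(self.w_volume, features)))
        return mid_price * (1.0 + offset), fraction * remaining_volume


# Untrained (all-zero) weights: quote at the mid price and send half the remaining volume.
policy = LinearPlacementPolicy(w_price=[0.0, 0.0], w_volume=[0.0, 0.0])
price, volume = policy([0.3, -1.2], mid_price=10_000.0, remaining_volume=10.0)
print(price, volume)
```

Bounding the outputs this way means any weight vector, including one produced mid-training, yields a sensible order.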

Now, what do we need to train such a model? If we had a dataset of feature vectors with their associated prices and volumes (labels), we could use any supervised learning method to build the function approximator. The problem is that no such dataset exists. We therefore need either to train the model without labels or to generate the dataset ourselves. Our approach is to use reinforcement learning.
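To show what reinforcement learning uses in place of labels, here is a toy environment in the usual state/action/reward form. Everything in it is an assumption for illustration, not the formulation covered in the next part: the state is (steps left, remaining volume), the action is (volume, limit price), a limit buy fills completely at the ask when its price reaches the ask, and the reward is the negative implementation shortfall versus the arrival price, with a penalty for volume left unfilled at expiry. `ExecutionEnv` and its parameters are hypothetical.

```python
from typing import List, Tuple

State = Tuple[int, float]  # (decision steps left, remaining volume)


class ExecutionEnv:
    """Toy RL environment for executing a buy order; all dynamics are assumptions."""

    def __init__(self, ask_path: List[float], total_volume: float, unfilled_penalty: float = 100.0):
        self.ask_path = ask_path                  # hypothetical best ask at each decision step
        self.arrival_price = ask_path[0]          # benchmark price when the parent order arrives
        self.unfilled_penalty = unfilled_penalty  # assumed $ cost per unit unfilled at expiry
        self.remaining = total_volume
        self.t = 0

    def state(self) -> State:
        return len(self.ask_path) - self.t, self.remaining

    def step(self, volume: float, price: float) -> Tuple[State, float, bool]:
        """Apply action (volume, limit price); return (next state, reward, done)."""
        volume = min(volume, self.remaining)
        ask = self.ask_path[self.t]
        # Simplistic fill model: a limit buy at or above the ask fills completely at the ask;
        # otherwise it does not fill at all.
        filled = volume if price >= ask else 0.0
        # Reward = negative implementation shortfall of this fill versus the arrival price.
        reward = filled * (self.arrival_price - ask)
        self.remaining -= filled
        self.t += 1
        done = self.remaining <= 1e-12 or self.t == len(self.ask_path)
        if done:
            reward -= self.unfilled_penalty * self.remaining
        return self.state(), reward, done


env = ExecutionEnv(ask_path=[100.0, 99.0, 101.0], total_volume=2.0)
rewards = []
for volume, price in [(1.0, 99.5), (1.0, 99.5), (1.0, 101.0)]:
    _, reward, done = env.step(volume, price)
    rewards.append(reward)
print(rewards, done)
```

Here the first bid is too low to fill, the second buys 1 unit below the arrival price (reward 1.0), and the third buys above it (reward -1.0). An RL algorithm would search for a policy that maximizes the sum of such rewards, which requires no labeled prices or volumes.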

In the next part of this series, we will cover the reinforcement learning approach used to build the execution model. Here is a sneak peek of the next part:

Behnam Sabeti, Chief Data Officer, Eveince.