Auxiliary Tasks for Reinforcement Learning based Order Placement

Improving Explainability, Stability, and Performance in Complex Action Spaces

In previous blog posts on Eveince Order Placement, we discussed a modeling approach using Reinforcement Learning and the simulation environment required to train such a model. While reinforcement learning provides a framework for problems that involve planning and policy optimization, it introduces interpretability and stability issues in the training process. These issues are more challenging in complex action spaces such as order placement. In this blog post, we lay out a framework that incorporates related tasks to speed up the convergence of the underlying RL algorithm and to improve performance and explainability.

As described in a previous post, order placement training involves an agent interacting with a simulation environment and producing experiences for the learner to train on. The training experiences are constructed using a reward scheme, e.g., implementation shortfall, which is the sole source of the learning signal.

The training algorithm needs to explore a huge action space to find the optimal execution policy using only the reward provided by the environment. The learning target is also unstable, since it includes the model's own estimate of future rewards. Auxiliary tasks can be employed in this situation to provide related training signals and speed up the training process.
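To make the instability concrete, consider the standard one-step DQN target (DQN is the architecture discussed later in this post). The notation below is conventional and is an assumption here, not taken from the Eveince implementation: $r_t$ is the reward, $\gamma$ the discount factor, and $\theta^{-}$ the parameters of a periodically updated target network.

$$
y_t = r_t + \gamma \max_{a'} Q(s_{t+1}, a'; \theta^{-})
$$

Because $y_t$ depends on the network's own estimate of future value, the regression target moves as training proceeds, which is one reason an additional, fixed-label signal can help.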

Auxiliary Tasks

These are extra tasks embedded in the training procedure to provide additional training signals. Auxiliary tasks are learned simultaneously with the main RL goal, which leads to a more consistent learning signal. They are used to learn a shared representation of the environment and agent space, which speeds up training and brings other benefits, such as pre-training, that we will get to later.

An auxiliary task adds a cost function for a quantity that the RL agent can predict and then observe from the environment, in a self-supervised or supervised fashion. In the case of unlabeled inputs, losses are defined via surrogate annotations synthesized from raw input, while in the supervised case, actual labels are provided to compute each task’s loss.

Auxiliary tasks usually consist of estimating quantities that are relevant to solving the main RL problem. For example, in a maze-solving problem, as illustrated in the above image, one auxiliary task is to predict the number of pixels that will change with each action. These tasks may take the form of classification or regression problems, or alternatively may maximize reinforcement learning objectives of their own.

Order Placement Auxiliary Tasks

In the case of order placement, several tasks naturally provide a learning signal for the model. Consider a scenario where a person decides how to divide a parent order into small child orders. If that person knew the future market price over different horizons, or the future market volatility, she could make more informed decisions. We can use the same reasoning to define several auxiliary tasks trained simultaneously with the main RL goal. These auxiliary tasks include:

Future Market Price Direction is formulated as a classification problem. Several time horizons can also be used to provide fine-grained price predictions into the future.

Future Market Volatility can be formulated as a classification or regression task.

It’s worth noting that these quantities could instead be estimated externally and fed to the agent as state space features. However, we can also predict them inside the main model and use the predictions as part of the final representation of the agent state. The choice of labeling mechanism, time horizon, and outlier detection, along with the other design questions of any classic classification or regression problem, needs careful thought too; this is beyond the scope of this blog post and will be discussed in a separate article.

Order Placement DQN Architecture with Auxiliary Tasks

As described before, auxiliary tasks are employed to learn a shared representation of the environment, which is then used to optimize the main RL goal. The network architecture, including representation learning layers, auxiliary tasks, and Q-value estimation layers, is illustrated in the figure below. Please note that this figure is meant to provide an overview of the desired architecture; the choice of layers and activations is more of an art and takes experience to get right.
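To make the multi-head layout concrete, here is a minimal forward-pass sketch in NumPy. The single shared hidden layer, the ReLU activation, the layer sizes, and the names `MultiHeadQNetwork`, `direction_head`, and `volatility_head` are all illustrative assumptions, not the architecture actually used in the Eveince model.

```python
import numpy as np


class MultiHeadQNetwork:
    """Shared trunk feeding a Q-value head and two auxiliary heads (hypothetical sizes)."""

    def __init__(self, state_dim, hidden_dim, n_actions, n_direction_classes=3, seed=0):
        rng = np.random.default_rng(seed)

        def dense(n_in, n_out):
            # Small random weights and a zero bias for one fully connected layer.
            return rng.normal(0.0, 0.1, size=(n_in, n_out)), np.zeros(n_out)

        self.trunk = dense(state_dim, hidden_dim)  # representation learning layer
        self.q_head = dense(hidden_dim, n_actions)  # main RL output
        self.direction_head = dense(hidden_dim, n_direction_classes)  # auxiliary classifier
        self.volatility_head = dense(hidden_dim, 1)  # auxiliary regressor

    def forward(self, states):
        w, b = self.trunk
        shared = np.maximum(states @ w + b, 0.0)  # ReLU over the shared representation
        q_values = shared @ self.q_head[0] + self.q_head[1]
        direction_logits = shared @ self.direction_head[0] + self.direction_head[1]
        volatility = (shared @ self.volatility_head[0] + self.volatility_head[1])[:, 0]
        return q_values, direction_logits, volatility


# A batch of 4 dummy states with 8 features each.
net = MultiHeadQNetwork(state_dim=8, hidden_dim=16, n_actions=5)
q_values, direction_logits, volatility = net.forward(np.ones((4, 8)))
```

Every head reads the same `shared` activations, so gradients from the auxiliary losses would shape the representation that the Q-value head also relies on.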

In the training phase of a multi-head model, we need to provide labels for each head so that the loss function can be computed and backpropagated. It’s worth noting that pre-computing the labels for all episodes and storing them in a look-up table saves you a ton of time and compute.
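A minimal sketch of such a look-up table, using only the Python standard library, is shown here. The three-class labeling rule, the `threshold` parameter, the realized-volatility definition, and the `(episode_id, step, horizon)` key layout are assumptions made for illustration; the post does not specify the labeling mechanism.

```python
from statistics import pstdev


def direction_labels(prices, horizon, threshold=0.0):
    """Label each step 0 (down), 1 (flat), or 2 (up) from the return over `horizon` steps."""
    labels = []
    for t in range(len(prices) - horizon):
        ret = prices[t + horizon] / prices[t] - 1.0
        if ret > threshold:
            labels.append(2)
        elif ret < -threshold:
            labels.append(0)
        else:
            labels.append(1)
    return labels


def volatility_labels(prices, horizon):
    """Regression target: standard deviation of one-step returns over the next `horizon` steps."""
    returns = [prices[i + 1] / prices[i] - 1.0 for i in range(len(prices) - 1)]
    return [pstdev(returns[t:t + horizon]) for t in range(len(returns) - horizon + 1)]


def build_label_table(episodes, horizons=(1, 5)):
    """Pre-compute direction labels once, keyed by (episode_id, step, horizon)."""
    table = {}
    for episode_id, prices in episodes.items():
        for h in horizons:
            for t, label in enumerate(direction_labels(prices, h)):
                table[(episode_id, t, h)] = label
    return table


# Toy price path, made up purely to exercise the functions.
episodes = {"ep0": [100.0, 101.0, 100.5, 100.5, 102.0, 101.0]}
table = build_label_table(episodes, horizons=(1, 2))
```

During training, the learner can then fetch the label for a sampled experience with a single dictionary lookup instead of recomputing it from raw prices. Steps too close to the end of an episode have no label for a given horizon and are simply absent from the table.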

Loss Definition

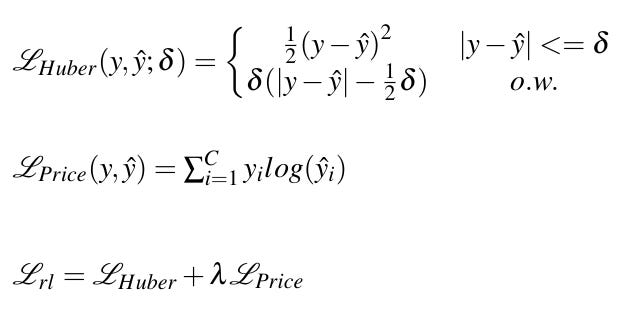

The choice of loss function depends on the auxiliary tasks you use. For example, consider a case with one market price direction prediction task alongside the main DQN Huber loss. The loss function would be:
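As a sketch, assuming a cross-entropy loss for the direction classifier and a single scalar weight $\lambda$ (both are assumptions, as is the notation), the combined objective could be written as:

$$
\mathcal{L}(\theta) = \mathcal{L}_{\text{Huber}}\big(y - Q(s, a; \theta)\big) + \lambda \, \mathcal{L}_{\text{CE}}\big(d, \hat{d}(s; \theta)\big)
$$

Here $y$ is the DQN target, $Q(s, a; \theta)$ is the predicted Q-value of the action taken, $d$ is the price direction label, and $\hat{d}(s; \theta)$ is the class distribution predicted by the auxiliary head. With more auxiliary tasks, each one would contribute its own weighted term.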

One major aspect of a multi-objective loss definition is the weighting scheme. In the case of auxiliary tasks, we need to be careful not to set large weights for the auxiliary losses, since the gradient would then be dominated by the auxiliary loss and the main goal would be overshadowed. It’s also useful to have dynamic weights, where higher weights are used for the auxiliary tasks in the first rounds of training so that they provide a learning signal early on. As the model is trained, the auxiliary weight is decreased to shift the focus to the main goal.
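The dynamic weighting idea can be sketched in plain Python as follows. The linear schedule, its `start`, `end`, and `decay_steps` values, and the function names are hypothetical choices for illustration; other decay shapes would serve the same purpose.

```python
import math


def huber(error, delta=1.0):
    """Huber loss of a single TD error."""
    a = abs(error)
    return 0.5 * error * error if a <= delta else delta * (a - 0.5 * delta)


def cross_entropy(probs, label):
    """Negative log-likelihood of the true class under the predicted distribution."""
    return -math.log(max(probs[label], 1e-12))


def aux_weight(step, start=1.0, end=0.1, decay_steps=10_000):
    """Linearly anneal the auxiliary weight from `start` to `end`, then hold it at `end`."""
    frac = min(max(step / decay_steps, 0.0), 1.0)
    return start + frac * (end - start)


def total_loss(td_error, direction_probs, direction_label, step):
    """Main DQN Huber loss plus the annealed auxiliary classification loss."""
    return huber(td_error) + aux_weight(step) * cross_entropy(direction_probs, direction_label)


# Same sample early and late in training: the auxiliary term shrinks over time.
early = total_loss(0.5, [0.2, 0.3, 0.5], direction_label=2, step=0)
late = total_loss(0.5, [0.2, 0.3, 0.5], direction_label=2, step=10_000)
```

Keeping `end` above zero leaves a small auxiliary signal in place throughout training; setting it to zero would switch the auxiliary task off entirely once the schedule finishes.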

One nice property of using auxiliary tasks in RL algorithms is the ability to pre-train the model on those tasks before training on the main goal. This initializes the model weights based on related concepts, giving the main goal optimization a richer embedding space to start from.

Using auxiliary tasks also helps model interpretability. One major challenge in deep reinforcement learning is interpreting and explaining the model's decisions. When auxiliary tasks are used in training, however, one can study the relation between the model's decisions and its auxiliary predictions. For instance, we expect the model to place aggressive orders when it predicts a price move against the order, such as a rising price while buying.
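One simple way to run such a study is to group logged decisions by the auxiliary prediction and compare how aggressive the actions were. The sketch below assumes a hypothetical log of `(side, predicted_direction, aggressiveness)` tuples, where `aggressiveness` is an illustrative score in [0, 1]; the records are made-up toy values, not results.

```python
from collections import defaultdict


def aggressiveness_by_prediction(records):
    """Average action aggressiveness, split by whether the predicted move is adverse."""
    totals = defaultdict(lambda: [0.0, 0])
    for side, predicted_direction, aggressiveness in records:
        # A predicted rise is adverse for a buy; a predicted fall is adverse for a sell.
        adverse = (side == "buy" and predicted_direction == "up") or (
            side == "sell" and predicted_direction == "down"
        )
        bucket = "adverse" if adverse else "other"
        totals[bucket][0] += aggressiveness
        totals[bucket][1] += 1
    return {bucket: total / count for bucket, (total, count) in totals.items()}


# Toy log, invented only to show the shape of the analysis.
records = [
    ("buy", "up", 1.0),
    ("buy", "down", 0.25),
    ("sell", "down", 0.5),
    ("sell", "up", 0.25),
]
summary = aggressiveness_by_prediction(records)
```

If the model behaves as expected, the average in the `adverse` bucket should be noticeably higher than in the `other` bucket; a missing or reversed gap would be a cue to inspect the policy more closely.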

To sum up, using auxiliary tasks for reinforcement learning based order placement provides:

a more stable learning signal

faster convergence

pre-training capability

an interpretation of the model decisions

In this blog post, we went through the approach implemented in the Eveince order placement model to employ auxiliary tasks. The approach provides a more stable learning signal, along with other benefits such as faster convergence and interpretability. In the upcoming posts, we will cover the results of this approach, practical tips and tricks, and implementation details.